How A/B Testing Can Increase Revenue and Conversion Rates For Your eCommerce Store

A blog post explaining what A/B testing is and how crucial it is for improving your eCommerce store to increase conversion rates and revenue.

If you're not already running A/B tests on your eCommerce store, you're leaving a lot of money on the table. To give you an idea of how quickly this form of testing is catching on, the global testing software market is projected to be worth $1.08 billion by 2025.

This form of testing is a simple yet incredibly powerful tool that can help you increase revenue and conversion rates. By split-testing different elements on your store (such as headlines, images, call-to-action buttons, etc.), you can figure out which versions are most effective at getting visitors to take the desired action.

This approach to testing is most commonly used by online businesses, but it can also be used offline. For example, a retail store could test two different floor layouts to see which one results in more sales.

Not only does this method save you from guessing what works best, it also allows you to constantly improve your store's performance over time. And the best part is that once you have a winning combination, it can be replicated across all your marketing channels.

However, there are a few pitfalls to be aware of when using this method. First of all, this form of website testing can be expensive and time-consuming. You need to have enough traffic to your site to make a meaningful comparison, and you need to be prepared to run the test for a long period of time.

Secondly, A/B testing can sometimes produce inconclusive results. This is because there are a lot of variables that can affect conversion rates, and it can be difficult to isolate the effect of a single change.

In reality, it's about commitment to the process and consistency in following it. It's about measuring the impact from one variation to the next.

Let's dive in.

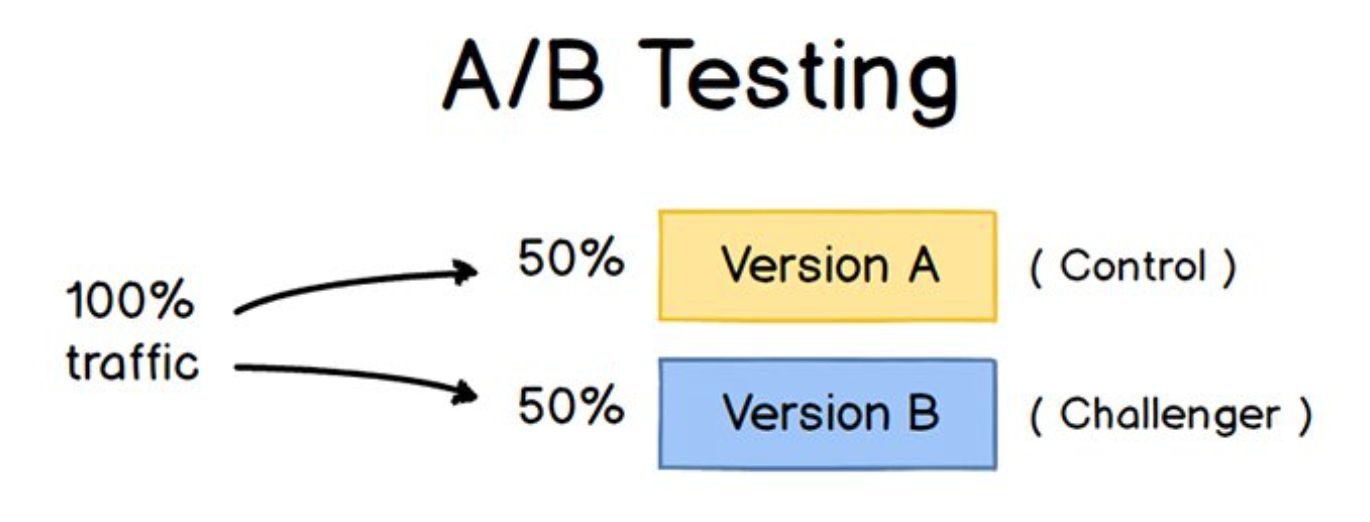

What Is A/B Testing?

This is a method of experimentation used to compare two versions of a web page or app in order to determine which one performs better. This could be in terms of conversion rate (the percentage of visitors who take a desired action, such as making a purchase) or revenue (the total amount of money earned from sales).

It is considered an essential tool for any business that wants to optimise its online presence and maximise its chances of success.

By testing different versions of a web page or app, businesses can learn what works best for their particular audience and make changes accordingly. The beauty of testing is that getting started doesn't require a large upfront investment - all you need is a willingness to experiment and a tool to help you track your results.

Does It Really Help To Increase Conversion Rates?

You bet it does! And not just conversion rates, but recurring revenue too. When successfully implemented, site testing can pay dividends. VWO reports that average transaction size increases by $3 per visitor for eCommerce websites that use testing on an ongoing basis.

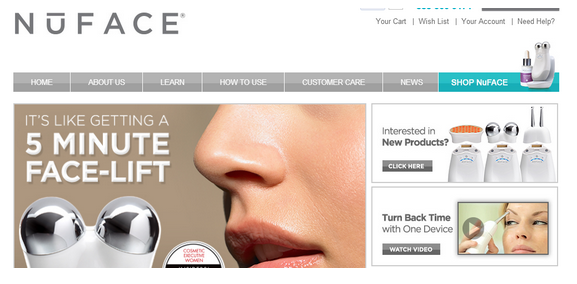

NuFACE is an online anti-aging skincare company that was searching for ways to boost its online presence and revenue. It ran a test with the hypothesis that "offering an incentive (free shipping) to all orders over $75 would increase conversions".

Version A (below) had no option for free shipping.

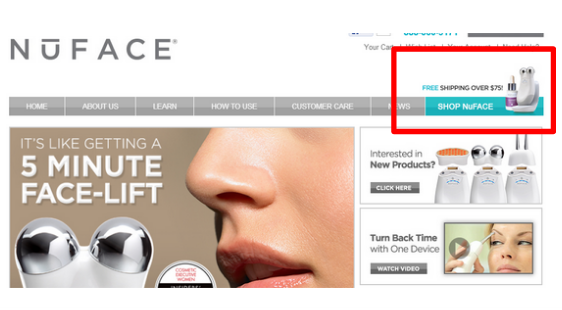

Version B (below) offered free shipping over $75.

What was the end result? Adding free shipping increased orders by 90% and the average order value by 7.32%.

The likely explanation is that customers felt a stronger incentive to add more items to their shopping cart, just to unlock the free shipping offer.

This is just one example of how simple and effective this form of testing can be.

What Can You Test On Your Website?

In all honesty, just about any element of a site can be tested. You might not consider some elements worth the effort, but you would be surprised how successful some companies become simply by focusing on perfecting the user experience.

When it comes to testing, there are a few key elements that can have a big impact on your conversion rate and overall revenue. Here are a few of the most important elements to consider testing:

- The headline or title of your page

- The images you decide to use

- Copy or text forming website content

- The call-to-action (CTA) including button shape, placement and text used

- The overall layout and design of the page

- The checkout process

- Testimonials and social proof

Current statistics are quite fascinating - one third of all testing starts with the call-to-action button, 20% begins with the headline, 10% dissects the layout and 8% focuses on website copy.

By testing different versions of these elements, you can gradually improve your conversion rate and increase your revenue. So don't be afraid to experiment and get your hands dirty!

The First Steps: Element Choice And Metrics

When it comes to testing, there are a few key steps involved in order to see success.

It's worth keeping in mind that you only need to know the general principles of testing in order to apply them across devices - the process itself is no different on desktop and mobile, even though visitors on each may behave differently. This makes it much easier to test across platforms.

First, you need to identify what areas of your website or app you want to test. This could be anything from the copy on a landing page to the design of a button. Spend some time thinking this through carefully.

Once you've identified what you want to test, you need to set up your test by creating two versions of the element - version A and version B. One will remain the control, and the other will become the treatment.

Next, you'll need to determine what metric you want to track. This could be anything from clicks to conversion rates.

There is a big difference between clicks and conversion rates in A/B testing. Clicks only measure how often someone clicks on a link, while conversion rates measure how often visits result in a desired action, such as a purchase or sign-up.

Conversion rates are much more important than clicks when it comes to gauging the success of an A/B test. That's because they directly impact revenue. If the test increases conversion rates, it means more people are taking the desired action, which translates into more money for your business. So if you're looking to boost your bottom line, focus on conversion rates rather than clicks.

Conversion rate is just one metric, and it's important to consider other factors such as customer satisfaction and average order value when making decisions about your site. So while this form of testing can be a useful tool, it's important to be aware of its limitations too.
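To make the difference between these metrics concrete, here is a minimal Python sketch. The `VariantStats` class and every figure in it are made up for illustration and are not taken from a real store. It shows how a variant can attract far more clicks while barely moving conversion rate, and how average order value sits alongside both.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Raw counts for one variant (hypothetical numbers, for illustration only)."""
    visitors: int
    clicks: int      # clicks on the element under test
    orders: int      # completed purchases
    revenue: float   # total revenue from those orders

    @property
    def click_rate(self) -> float:
        # Share of visitors who clicked the tested element
        return self.clicks / self.visitors

    @property
    def conversion_rate(self) -> float:
        # Share of visitors who completed a purchase
        return self.orders / self.visitors

    @property
    def average_order_value(self) -> float:
        # Revenue per order; 0.0 when there are no orders yet
        return self.revenue / self.orders if self.orders else 0.0


control = VariantStats(visitors=5000, clicks=900, orders=150, revenue=9000.0)
treatment = VariantStats(visitors=5000, clicks=1200, orders=155, revenue=9100.0)

for name, stats in (("A (control)", control), ("B (treatment)", treatment)):
    print(
        f"{name}: click rate {stats.click_rate:.1%}, "
        f"conversion rate {stats.conversion_rate:.2%}, "
        f"average order value ${stats.average_order_value:.2f}"
    )
```

In this made-up data the treatment wins comfortably on clicks, yet its conversion rate is almost unchanged and its average order value is slightly lower, which is why the choice of metric matters.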

The Importance Of Randomisation

Harvard Business Review discusses how crucial randomisation is throughout this process.

Think about an eCommerce store that chooses to evaluate the size of a certain button. Visitors on a mobile device may respond to a different button size than visitors on a desktop.

Leaving the allocation of visitors to page versions up to randomisation means that you minimise the chance of external factors, such as whether a visitor happens to be on a desktop computer, skewing the test results.
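One common way to randomise in practice is to hash a visitor identifier, so each visitor lands in a group that has nothing to do with their device or behaviour, and sees the same version on every visit. The sketch below is only an illustration of that idea: the `assign_variant` function, the experiment name and the visitor IDs are all assumptions, not part of any particular testing tool.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str = "button-size") -> str:
    """Place a visitor in 'A' or 'B' with roughly equal odds.

    Hashing the experiment name together with the visitor ID gives an
    assignment that looks random but is stable across repeat visits.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # a number from 0 to 99
    return "A" if bucket < 50 else "B"


# Hypothetical visitor IDs; in practice these might come from a cookie.
for visitor in ("visitor-1001", "visitor-1002", "visitor-1003"):
    print(visitor, "->", assign_variant(visitor))
```

Because the assignment depends only on the ID, mobile and desktop visitors end up spread across both versions in roughly the same proportions.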

What About Sample Size?

When it comes to any type of website testing, sample size is everything. The larger it is, the more accurate your results will be.

But how big does the sample size need to be? The answer depends on a few factors, including your conversion rate and your desired level of confidence. But in general, you'll need a minimum of 100 conversions per variant to get reliable results. So, if you're testing two variations of a page, you'll need a minimum of 200 total conversions.

Of course, the bigger the better. If you can afford to test with a larger sample size, you'll be able to get more accurate results. But even a small sample can give you valuable insights if you know how to interpret the data correctly.

50/50 Split Testing

Using a true 50/50 split is the fastest and most accurate way of conducting A/B testing.

Technically you can split your traffic in a variety of other ways, including 80/20 and 70/30 splits. But unequal splits do complicate things considerably - the smaller group collects data more slowly, so results are less certain and it takes longer to reach a statistically significant conclusion.
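A quick way to see why an even split is the most efficient is to compare the uncertainty (standard error) of the measured difference for the same total traffic. This is a rough sketch under simplifying assumptions: both versions are given the same 5% conversion rate, and the `standard_error` helper and the traffic figure are hypothetical.

```python
import math


def standard_error(total_visitors: int, share_a: float, rate: float = 0.05) -> float:
    """Standard error of the difference between two conversion rates.

    Assumes both versions convert at `rate` and that `share_a` of the
    total traffic goes to version A, with the rest going to version B.
    """
    n_a = total_visitors * share_a
    n_b = total_visitors * (1 - share_a)
    return math.sqrt(rate * (1 - rate) * (1 / n_a + 1 / n_b))


# Same 10,000 visitors, three different ways of splitting them.
for pct_a in (50, 70, 80):
    se = standard_error(10_000, pct_a / 100)
    print(f"{pct_a}/{100 - pct_a} split: standard error {se:.5f}")
```

The 50/50 split gives the smallest standard error, so the same amount of traffic produces a sharper comparison.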

Things To Factor In

Another important factor to consider is the size of the improvement you expect to detect. If your goal is to detect a 2% increase in conversion rates, you'll need a much larger cohort than if your goal is to detect a 10% increase. This is because small changes are harder to tell apart from random noise. Your baseline conversion rate matters too: the same relative change is easier to detect when the overall conversion rate is higher.

Finally, the level of confidence you need will also affect the required sample size. If you're aiming for a high level of confidence (for example, 95%), you'll need a larger sample size than if you're aiming for a lower level (such as 80%).

How Large Does Your Sample Size Need To Be?

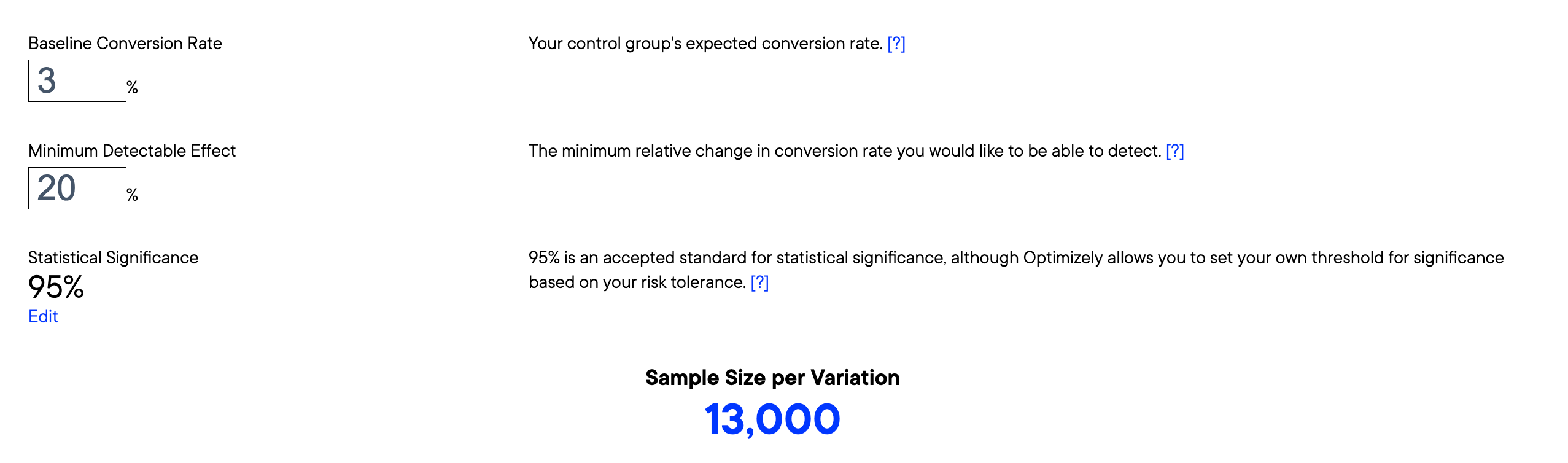

There are a few different ways to calculate it, but the most important thing is to make sure that your sample size is adequate for your goals. If you're not sure where to start, there are a few online calculators that can help you determine an appropriate group size for your A/B test. Just enter the relevant information (such as your current conversion rate, the lift you expect and your desired confidence level) and the calculator will do the rest. Don't leave this part to chance.
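If you'd like to see roughly what such a calculator does under the hood, the sketch below uses a standard textbook approximation for comparing two conversion rates. It is not the formula of any specific tool; the `sample_size_per_variant` function, the 80% statistical power default and the example inputs are all assumptions made for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    relative_lift: float,
    confidence: float = 0.95,
    power: float = 0.80,
) -> int:
    """Approximate visitors needed per variant for a two-sided test.

    baseline_rate: current conversion rate, e.g. 0.03 for 3%
    relative_lift: smallest relative improvement to detect, e.g. 0.10 for +10%
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical store with a 3% baseline conversion rate.
for lift in (0.10, 0.02):
    needed = sample_size_per_variant(baseline_rate=0.03, relative_lift=lift)
    print(f"Detecting a {lift:.0%} relative lift: about {needed:,} visitors per variant")
```

Trying a few different baseline rates, lifts and confidence levels is a quick way to get a feel for how each input moves the required sample size before you commit to a test.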

How Long Should The Test Run For?

There's no hard and fast rule for how long an A/B test should run.

The end goal is to apply the test to a representative sample that reflects your site visitors.

It's recommended that you complete testing for two business cycles or two full weeks (and a maximum of four), for an accurate snapshot.

This way, you are mitigating the following external factors:

- The effect of the day of the week. Most eCommerce stores find that their traffic fluctuates across the week. Testing for two whole weeks captures the natural ebb and flow of visits.

- Return visitors. Testing for a longer period of time gives you the chance to capture return customers, or those who were initially undecided and have now come back to make the final purchase.

Final Steps: Interpretation Of The Test Results

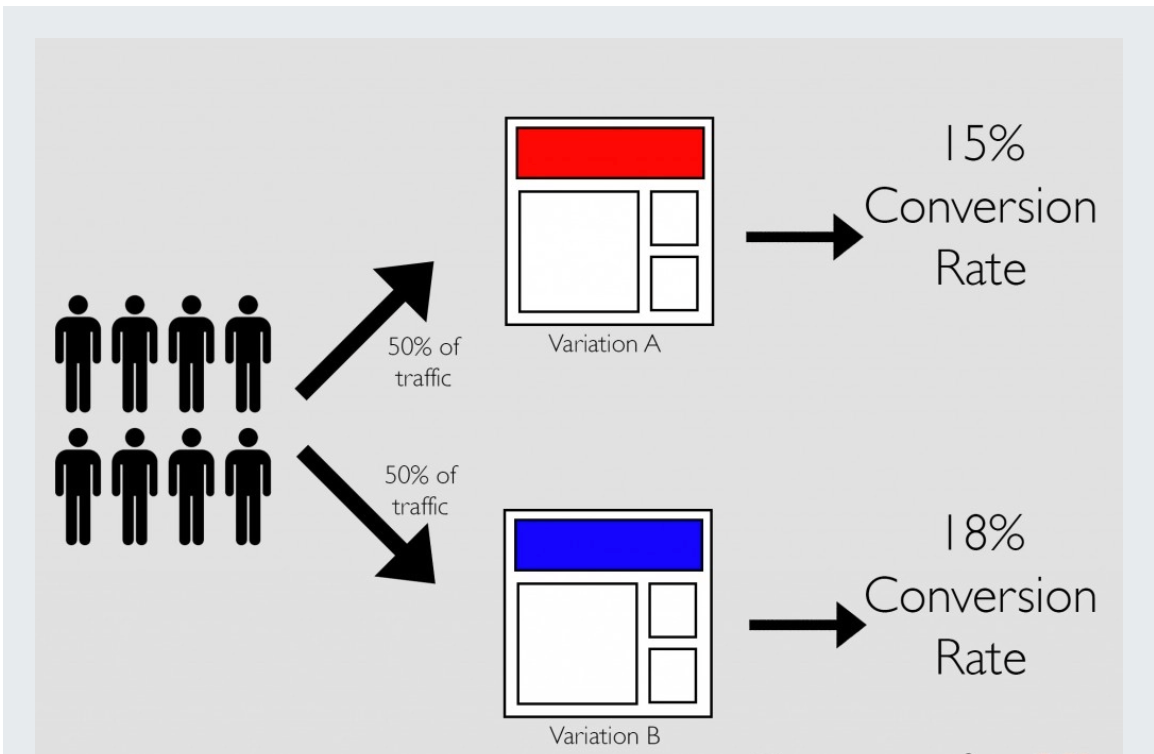

After a sufficient number of online visitors has been directed to the page, you'll be able to see how the two versions compare in terms of the metric you're tracking.

Statistical significance is a key concept in all forms of testing. It tells you how likely it is that a difference as large as the one you observed would show up by chance alone, if the two versions actually performed the same.

For example, let's say you are testing two different versions of a web page to see which one converts better. Version A has a conversion rate of 5%, while version B has a conversion rate of 10%.

At first glance, it might seem like version B is the clear winner. But we can't just stop there! We need to calculate the statistical significance of this difference to be sure.

If the difference between the two conversion rates is statistically significant, that means it's unlikely to have happened by chance. In other words, version B is probably a better performing page than version A. If the difference isn't statistically significant, it could be due to chance, or there may not be enough data to say for sure.

There are a few different statistical tests that can be used to determine whether a difference is statistically significant. The most common is the chi-squared test, but there are also t-tests and z-tests.
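As an illustration, here is a minimal sketch of one of those options, a two-proportion z-test, written with Python's standard library. The `two_proportion_z_test` function and the visitor counts are assumptions; only the 5% and 10% conversion rates come from the example above. Most testing tools run a calculation like this for you.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return the z statistic and two-sided p-value for B versus A.

    conv_a, conv_b: number of conversions in each version
    n_a, n_b: number of visitors in each version
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # overall rate if A and B were identical
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Same 5% vs 10% conversion rates, two hypothetical traffic levels.
for visitors in (100, 1000):
    conv_a = visitors * 5 // 100
    conv_b = visitors * 10 // 100
    z, p = two_proportion_z_test(conv_a, visitors, conv_b, visitors)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"{visitors} visitors per version: z = {z:.2f}, p = {p:.4f} ({verdict} at the 95% level)")
```

With only 100 visitors per version, the gap between 5% and 10% is not statistically significant, while with 1,000 visitors per version it is. The conversion rates are identical in both cases; only the amount of data changes.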

If the results of your A/B test show that the treatment significantly outperforms the control, you can implement the changes on your live site or app. If the results are not significant, you can try a different version of the treatment or set up a new test around a different metric.

Essential Tools

There are a number of different tools available to assist with A/B testing. Some of the more popular options include Google Analytics, Optimizely, and Visual Website Optimizer.

Each of these tools has its own advantages and disadvantages, so it's important to choose the one that's right for your specific needs. For example, Google Analytics is a great option if you're looking for a free tool with a lot of features. Optimizely is a good choice if you need a tool that's easy to use and comes with a lot of internal support. Visual Website Optimizer is a good option if you're looking for a tool that's affordable and offers a lot of flexibility.

Final eCommerce Pearls

With so many different techniques out there, it can be tough to know which ones are the best to use in order to get the most bang for your buck.

That's why we've put together a list of the best practice recommendations for testing, so you can make sure you're using the most effective techniques possible.

- Test one element at a time. Changing too many variables at once can lead to confusing results and make it difficult to determine what actually works.

- Set up a solid test plan before you start

- Make sure you have enough site visitors to get reliable results. Don't rely on small sample sizes.

- Keep an eye on your metrics throughout the testing

- Be patient. Testing takes time, so don’t expect to see results overnight. Give each test a fair chance to run its course.

- Follow up on all results. Just because a test is over doesn’t mean you should forget about it. Follow up with your team and discuss what worked, what didn’t, and how you can apply the lessons learned to future tests.

- Don't take shortcuts. Avoid cloaking, which means showing one version of your content to human visitors and another to search-engine crawlers. Doing this will hurt your SEO and can lead to a demotion in Google's search rankings.

- Enable forward thinking. Use a temporary (302) URL redirect (instead of a permanent 301), since it is only a limited-time experiment.

- Be prepared to implement the winning variation and make sure you take statistical significance into account before making any decisions.

The Take Home Message

When used correctly, A/B testing can be a powerful way to improve your website's performance and increase revenue. By testing different versions of your site and measuring the results, you can determine which changes are most effective in achieving your desired goals. This is how successful business is done.

However, it is not a magic bullet, and it's important to understand the limitations of this approach before you implement it on your own site. If you're not careful, you can easily waste time and funds on tests that don't produce meaningful results.

Starting with this blog post, draw on all the resources you can to better understand A/B testing in its simplest form. If you focus on carefully designing and tracking your A/B tests, you can make data-driven decisions that will improve your bottom line.

What's next?

We're well aware this is a lot of information to take in, and it can be quite difficult to execute correctly if you haven't run an A/B test or two before.

If you're unsure what to do next, get in touch for a discovery call.

We offer an audit, where we review your eCommerce store and give you actionable tips to take it to the next level.
